#004 Navigating the IaC Minefield

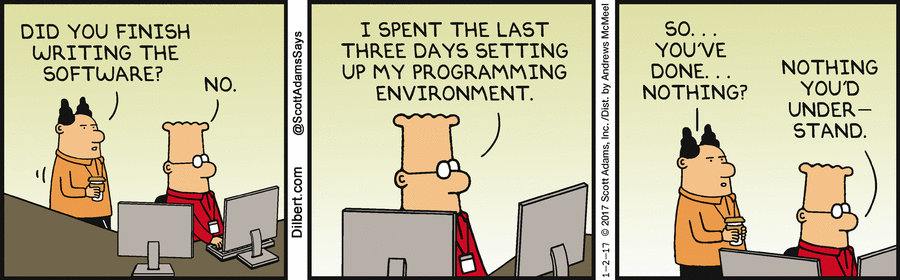

What started as a simple blog deployment has evolved into something much more powerful. Today, I’m documenting the transition to a full Infrastructure as Code (IaC) stack using Terraform to manage my DNS (Porkbun), my Reverse Proxy (Traefik), my CMS (Ghost), and my Identity Provider (Authentik). As a manager in my day job, I have repeatedly had to justify IaC to non-technical leadership, and this post sums up my case. The short version: any MVP should include IaC, or you should be ready to spend 10 to 100 times that effort later when scaling up.

While Terraform promises a "one-click" reality, the journey there was paved with specific technical hurdles that required a deep dive into the guts of Docker and Traefik.

1. The Traefik v3 "Version Handshake"

The first hurdle was the silent failure of the reverse proxy. Modern Linux distros (like my Debian 13) ship with a recent Docker Engine (v27+ in my case), and the daemon on my host rejected any client speaking an API older than v1.44.

I initially tried running Traefik v3.1, but it defaulted to an older API client. This resulted in a cryptic "client version is too old" error that prevented Traefik from even seeing my containers. The breakthrough came with Traefik v3.6, which introduced Auto-Negotiation. By upgrading the image and removing hardcoded version flags, Traefik was finally able to "shake hands" with the Docker daemon.
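Here is a minimal sketch of how that fix might look in Terraform. It assumes the community kreuzwerker/docker provider is configured elsewhere, and the resource names and entrypoint are hypothetical. Only the arguments relevant to this fix are shown: a pinned v3.6 image tag and a flag list that never forces a Docker API version.

```hcl
# Assumption: the kreuzwerker/docker provider is already configured.
resource "docker_image" "traefik" {
  name = "traefik:v3.6" # pinned tag, the release named in this post
}

resource "docker_container" "traefik" {
  name    = "traefik" # hypothetical container name
  image   = docker_image.traefik.image_id
  restart = "unless-stopped"

  # Static Traefik flags. Nothing here pins a Docker API version,
  # so Traefik is free to negotiate one with the daemon.
  # The Docker socket mount is left out to keep the sketch focused.
  command = [
    "--providers.docker=true",
    "--providers.docker.exposedbydefault=false",
    "--entrypoints.web.address=:80",
  ]

  ports {
    internal = 80
    external = 80
  }
}
```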

2. The Ghost SQLite "String" Mystery

Ghost 5.x is designed for MySQL in production. When I tried to run it with the lightweight SQLite driver via Terraform, it repeatedly crashed with a TypeError: String expected.

This turned out to be a nuance in how Ghost parses environment variables. In a manual setup, you might get away with loose config, but in a declarative Terraform setup, the internal migrator (knex-migrator) is incredibly picky. I had to explicitly define database__connection__filename, using double underscores to represent nested JSON keys. Without that exact key path, the container would crash and restart in a loop (what Kubernetes would call "CrashLoopBackOff").
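As a rough illustration, the environment block could look like the sketch below. It assumes the kreuzwerker/docker provider. The URL is a placeholder, and the database path is my assumption based on the official image's default content directory, so adjust both to your setup.

```hcl
resource "docker_image" "ghost" {
  name = "ghost:5-alpine"
}

resource "docker_container" "ghost" {
  name  = "ghost" # hypothetical container name
  image = docker_image.ghost.image_id

  # Ghost maps double underscores in variable names to nested config keys:
  # database__connection__filename becomes database.connection.filename.
  env = [
    "url=https://blog.example.com", # placeholder domain
    "database__client=sqlite3",
    "database__connection__filename=/var/lib/ghost/content/data/ghost.db", # assumed path
  ]
}
```

Every value in env is a plain string, which seems to be exactly what the migrator wants to see for the filename key.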

3. The SSH "Killed" Signal & Resource Spikes

Perhaps the most frustrating challenge was Terraform losing its connection to the server mid-apply. Authentik is a powerful suite, but its initial startup—where it runs dozens of database migrations and spawns Gunicorn workers—is resource-intensive.

Even with 8GB of RAM, the sudden CPU spike during the "Handshake" phase would occasionally cause the kernel to deprioritize the SSH tunnel Terraform used to talk to the Docker socket. I saw the dreaded signal: killed error multiple times. I solved this by:

- Adding `ServerAliveInterval` to the Docker provider to keep the tunnel "warm."

- Learning that a "Killed" error in Terraform doesn't always mean a failed deployment; often, the server finishes the job, and I just needed a `terraform apply -refresh-only` to sync the state back to my Mac.
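The keep-alive setting from the first point can be sketched as follows, assuming the kreuzwerker/docker provider, which accepts extra SSH client options through its ssh_opts argument. The variable name, the example address in its description, and the interval values are illustrative and not taken from my actual config.

```hcl
terraform {
  required_providers {
    docker = {
      source = "kreuzwerker/docker"
    }
  }
}

variable "vps_host" {
  description = "SSH target for the Docker host, e.g. deploy@203.0.113.10 (placeholder)"
  type        = string
}

provider "docker" {
  host = "ssh://${var.vps_host}:22"

  # Standard OpenSSH client options: send a keep-alive probe every 30 seconds
  # and give up only after 5 unanswered probes. Values are illustrative.
  ssh_opts = [
    "-o", "ServerAliveInterval=30",
    "-o", "ServerAliveCountMax=5",
  ]
}
```

Keeping the SSH target in a variable also means a server move only touches one value.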

4. The Docker Socket Permissions (The "Silent 404")

Finally, there was the "Silent 404." Traefik was running, the blog was running, but Traefik’s dashboard was empty.

In Debian 13, security is tight. Even though I mounted /var/run/docker.sock, the Traefik process inside the container didn't have the permissions to read it. Unlike a manual sudo docker run, Terraform applies the configuration exactly as written. I had to identify the Docker Group ID on my host (using getent group docker) and pass that GID into the Terraform resource using user = "0:990". Only then could the "Traffic Cop" finally see the rest of the containers.
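A sketch of the permission fix, again assuming the kreuzwerker/docker provider and hypothetical resource names. Only the socket-related arguments are shown. The GID default of 990 is the value from my host; yours will likely differ, so check it with getent group docker.

```hcl
variable "docker_gid" {
  description = "GID of the host's docker group (from: getent group docker)"
  type        = number
  default     = 990 # value on my host; yours may differ
}

resource "docker_image" "traefik" {
  name = "traefik:v3.6"
}

resource "docker_container" "traefik" {
  name  = "traefik" # hypothetical container name
  image = docker_image.traefik.image_id

  # Run as UID 0 with the host's docker group as the primary group,
  # so the process is allowed to read the mounted socket.
  user = "0:${var.docker_gid}"

  volumes {
    host_path      = "/var/run/docker.sock"
    container_path = "/var/run/docker.sock"
    read_only      = true # Traefik only needs to read container metadata
  }
}
```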

A path for Disaster Recovery

If my VPS provider disappears today, I simply:

- Spin up a fresh Debian 13 VPS.

- Update the `host` IP in my `providers.tf`.

- Run `terraform apply`.

Within minutes, Terraform talks to Porkbun to update the DNS, connects to the new server via SSH, pulls the exact Docker images (Traefik v3.6, Ghost v5-alpine, Authentik 2024.12), and re-establishes the proxy network.

Infrastructure as Code manages the Infra, but my backups manage the Data. By keeping my host paths consistent (e.g., /opt/containers/blog/content), I can simply rsync my backup folder to the new server before running the apply. Terraform then "plugs" those existing data volumes into the new containers, and the blog comes back online exactly where I left off.
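The "plugging in" step can be sketched as a bind mount, assuming the kreuzwerker/docker provider. The local name is hypothetical, and the container path is my assumption based on the official Ghost image's default content directory.

```hcl
locals {
  data_root = "/opt/containers" # host path prefix used in this post
}

resource "docker_image" "ghost" {
  name = "ghost:5-alpine"
}

resource "docker_container" "ghost" {
  name  = "ghost" # hypothetical container name
  image = docker_image.ghost.image_id

  # Bind-mount the restored backup folder into the new container.
  # Because the host path never changes, a fresh server picks up
  # the old content as soon as the folder has been copied over.
  volumes {
    host_path      = "${local.data_root}/blog/content"
    container_path = "/var/lib/ghost/content" # assumed default content dir
  }
}
```

Since the data lives outside the container, Terraform can destroy and recreate the container freely without touching the content.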

Closing the Loop

These challenges underscore why IaC is so valuable. Once these "gotchas" were solved in the code, they were solved forever. Building this has been a masterclass in modern DevOps. I’ve moved away from the "snowflake server" (where everything is unique and fragile) to a disposable infrastructure model.

If you're still managing your homelab with manual docker-compose files and Portainer, I highly recommend making the jump to Terraform. It’s a steeper climb, especially when fighting with Docker socket permissions or SSH timeouts, but the view from the top—knowing your entire digital world is defined in a few .tf files—is absolutely worth it.